Have you ever wanted to run a ChatGPT-style chatbot, powered by open models like Llama 2 or Mistral, completely offline on your own computer? An open-source tool called Jan AI makes this easy to do, and best of all, it’s 100% free.

What is Jan AI?

Jan AI is an open-source platform that allows you to download, install, and run various conversational AI models and chatbots locally on your own computer.

Some key things to know about Jan AI:

- Completely free and open-source under the AGPLv3 license

- Works on Windows, Mac (including M1/M2 chips), and Linux

- Lets you run popular open models like Meta’s Llama 2, Mistral, and Mixtral offline

- Gives you full control and privacy since all data stays local

- Actively developed on GitHub

Why Run Chatbots Locally?

Here are some of the key benefits of running chatbots locally with a tool like Jan AI:

- Privacy – No data, messages, or information gets sent out over the internet when running models offline. Everything stays securely on your local device.

- Control – You decide what models to install, how they operate, and all policies around their use. No reliance on external services.

- Cost – It’s 100% free and open source. There are no per-message fees like those charged by hosted services. The only cost is your local compute resources.

- Customization – Open source means full flexibility to modify, tune, or alter the tool and models as desired.

So if privacy, control, costs, and customization are important – running chatbots locally is very appealing!

Getting Started with Jan AI

Getting started using Jan AI to run chatbots locally is simple. Just follow these steps:

1. Download Jan AI

Go to jan.ai and download the version compatible with your operating system – Windows, Mac, or Linux.

The installation takes just a minute or two. Then you’ll have access to the Jan AI interface locally on your device.

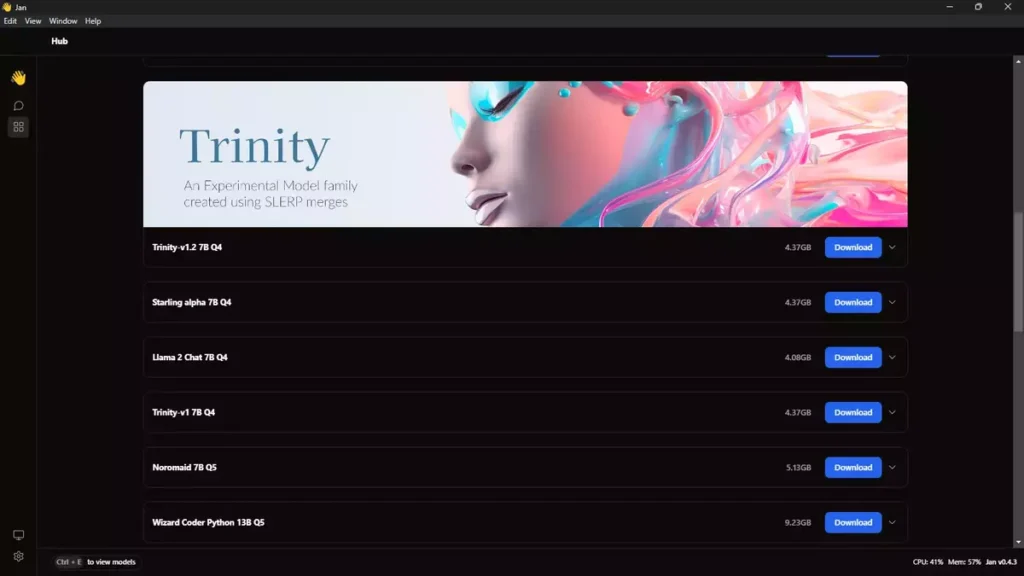

2. Browse Available Models

In the Jan AI interface you can browse and download a variety of conversational AI models and chatbots to install locally.

Some of the featured open source models available include:

- Mistral Instruct 7B Q4

- OpenHermes Neural 7B Q4

- Trinity-v1.2 7B Q4

- Starling alpha 7B Q4

- Llama 2 Chat 7B Q4

- Trinity-v1 7B Q4

- Noromaid 7B Q5

- Wizard Coder Python 13B Q5

- Pandora 11B Q4

- Solar Slerp 10.7B Q4

- Capybara 200k 34B Q5

- Phind 34B Q5

- Yi 34B Q5

- Deepseek Coder 33B Q5

- Mixtral 8x7B Instruct Q4

- Llama 2 Chat 70B Q4

- Lzlv 70B Q4

- Deepseek Coder 1.3B Q8

- OpenAI GPT 3.5 Turbo – API is Required

- OpenAI GPT 3.5 Turbo 16k 0613 – API is Required

- OpenAI GPT 4 – API is Required

- TinyLlama Chat 1.1B Q4

- Phi-2 3B Q4

I recommend Mixtral 8x7B Instruct Q4 if your hardware can handle it, because it is a mixture-of-experts model that combines eight expert networks, each roughly the size of a 7-billion-parameter model. In short, it’s the best one yet!
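To give a feel for what "eight experts" means in practice, here is a toy sketch of mixture-of-experts routing: a router scores every expert, only the top two are run, and their outputs are blended. This is an illustration only, not Mixtral's or Jan AI's actual code. The function names and the stand-in "experts" (simple multipliers) are made up for the example.

```python
import math

NUM_EXPERTS = 8  # eight experts, as in Mixtral 8x7B
TOP_K = 2        # only two experts are used per token


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_scores, top_k=TOP_K):
    """Pick the top_k highest-scoring experts and normalise their weights."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_scores[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(x, experts, router_scores):
    """Blend the outputs of the selected experts; the others are never run."""
    return sum(w * experts[i](x) for i, w in route(router_scores))


# Hypothetical experts: each one just scales its input by a different factor.
experts = [lambda x, k=k: x * (k + 1) for k in range(NUM_EXPERTS)]
scores = [0.0, 5.0, 1.0, 4.0, 0.0, 0.0, 0.0, 0.0]

print(route(scores))               # which experts were chosen, and their weights
print(moe_layer(1.0, experts, scores))
```

Because only two of the eight experts run for each token, a model like this can be faster to run than its total parameter count suggests, although all of the experts still have to fit in memory.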

Jan AI also offers access to proprietary models like GPT-3.5 Turbo and GPT-4, which require an OpenAI API key and run on OpenAI’s servers rather than on your machine. We’ll focus on the free, open-source models for this guide.

3. Install Desired Models

Browse the available models inside Jan AI and click install on any you want to use locally.

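The downloads are large, and the size grows with the model: expect roughly 4 GB for a 7B Q4 model and tens of gigabytes for the 34B and 70B models. Smaller models download in a few minutes on a decent internet connection, while the largest ones can take considerably longer.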

Once installed, the models are ready to use completely offline!
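If you want a rough idea of how big a download will be before you click install, you can estimate it from the model name: parameters times bits per weight, divided by eight bits per byte. The sketch below assumes that "Q4" means a nominal 4 bits per weight and "Q5" means 5. Real quantized files are usually somewhat larger because they store extra scaling data, so treat the result as a lower bound. The function name is made up for this example.

```python
def approx_download_gb(params_billions: float, bits_per_weight: float) -> float:
    """Rough file size in GB: parameters x bits per weight / 8 bits per byte."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# (model name, parameters in billions, nominal bits per weight)
models = [
    ("TinyLlama Chat 1.1B Q4", 1.1, 4),
    ("Mistral Instruct 7B Q4", 7, 4),
    ("Yi 34B Q5", 34, 5),
    ("Llama 2 Chat 70B Q4", 70, 4),
]

for name, params_b, bits in models:
    print(f"{name}: at least ~{approx_download_gb(params_b, bits):.1f} GB")
```

The same number is also a reasonable first guess at how much RAM or VRAM the model needs just to load, before counting the memory used by the conversation itself.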

4. Chat Away!

With models installed, go to the chat interface in Jan AI to start conversing.

Pick your desired model from the dropdown and start asking questions to have organic, free-flowing conversations!

Key Capabilities and Customizations

Jan AI makes running chatbots locally simple. But it also offers quite a few powerful customizations and capabilities to unlock the full potential.

Multiple Models

One of the best parts of Jan AI is the ability to install and switch between multiple different conversational models seamlessly.

You can install Llama 2 Chat 7B, Mixtral 8x7B Instruct, and more. Then dynamically change models to test differences in capabilities and outputs.

Being able to compare multiple models offline is an awesome capability unique to local solutions like Jan AI.

Adjust Model Parameters

Dial in model performance by tweaking key parameters like:

- Temperature

- Top K sampling

- Top P sampling

- Frequency penalty

- Presence penalty

This lets you customize model behavior, for example to favor more creative outputs (higher temperature) or more predictable, coherent ones (lower temperature).
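To make these settings less abstract, here is a simplified sketch of what they do when a model picks its next word. It is not Jan AI's code, and real inference engines differ in details such as the order in which the filters are applied. The function names and the toy scores are assumptions made for this example. The penalty formula follows the commonly documented convention of subtracting from a token's score based on how often it has already appeared.

```python
import math
import random


def apply_penalties(logits, counts, frequency_penalty=0.0, presence_penalty=0.0):
    """Lower the scores of tokens that already appeared in the output."""
    adjusted = {}
    for tok, logit in logits.items():
        seen = counts.get(tok, 0)
        # Frequency penalty grows with each repeat; presence penalty is a flat cost.
        flat = presence_penalty if seen > 0 else 0.0
        adjusted[tok] = logit - seen * frequency_penalty - flat
    return adjusted


def sample_next_token(logits, temperature=1.0, top_k=0, top_p=1.0, rng=random):
    """Sample one token from a dict of {token: score}."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Temperature: values below 1 sharpen the distribution, above 1 flatten it.
    scaled = {tok: logit / temperature for tok, logit in logits.items()}
    peak = max(scaled.values())
    exps = {tok: math.exp(v - peak) for tok, v in scaled.items()}
    total = sum(exps.values())
    ranked = sorted(((tok, e / total) for tok, e in exps.items()),
                    key=lambda pair: pair[1], reverse=True)
    # Top K: keep only the K most likely tokens (0 means no limit).
    if top_k > 0:
        ranked = ranked[:top_k]
    # Top P: keep the smallest set of tokens whose probabilities add up to top_p.
    kept, cumulative = [], 0.0
    for tok, prob in ranked:
        kept.append((tok, prob))
        cumulative += prob
        if cumulative >= top_p:
            break
    tokens = [tok for tok, _ in kept]
    weights = [prob for _, prob in kept]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Toy scores for four candidate next words.
logits = {"cat": 2.0, "dog": 1.5, "car": 0.2, "sky": -1.0}
already_used = {"cat": 2}  # "cat" has appeared twice so far

penalised = apply_penalties(logits, already_used,
                            frequency_penalty=0.5, presence_penalty=0.5)
print(sample_next_token(penalised, temperature=0.7, top_k=3, top_p=0.9))
```

Setting Top K to 1 makes the model always pick its single most likely word, while raising the temperature and Top P gives less likely words a real chance of being chosen.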

Input Custom Instructions

Guide model performance by providing custom instructions on intended use cases, priorities around outputs, and more.

For example, you can provide guidelines like:

- Be helpful, harmless, and honest

- Don’t make up facts

- Correct itself if it realizes it made a mistake

This allows you to better shape the conversational experience.
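Under the hood, chat tools commonly pass custom instructions to the model as a "system" message placed ahead of your own message. The sketch below shows that idea using the widely used role/content message format. Whether Jan AI stores instructions in exactly this shape is an assumption, and the function name is made up for the example.

```python
def build_messages(instructions, user_message):
    """Combine custom instructions and a user message into a chat request."""
    system_prompt = "\n".join(f"- {rule}" for rule in instructions)
    return [
        {"role": "system", "content": system_prompt},  # guidelines come first
        {"role": "user", "content": user_message},
    ]


instructions = [
    "Be helpful, harmless, and honest",
    "Don't make up facts",
    "Correct yourself if you realize you made a mistake",
]

for message in build_messages(instructions, "Summarize the plot of Hamlet."):
    print(f"[{message['role']}]\n{message['content']}\n")
```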

Utilize Chat History

Jan AI stores chat history and context by default, so models can reference previous statements in a conversation to stay contextually relevant, much like actual human conversations.

You can clear history at any point for isolated questions. But the default behavior to utilize context is ideal for most real-world use cases.
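Conceptually, "using chat history" means the earlier messages are sent to the model again along with each new question. Here is a minimal sketch of that idea, including a clear step and a simple cap on how many messages are kept. The class and its message-count limit are assumptions for illustration: real tools usually limit context by token count, and this is not Jan AI's implementation.

```python
class ChatHistory:
    """Keeps a running conversation and returns the context to send to a model."""

    def __init__(self, system_prompt="", max_messages=20):
        if max_messages < 1:
            raise ValueError("max_messages must be at least 1")
        self.system_prompt = system_prompt
        self.max_messages = max_messages
        self.messages = []

    def add(self, role, content):
        self.messages.append({"role": role, "content": content})

    def clear(self):
        """Start fresh, for example before asking an unrelated question."""
        self.messages = []

    def context(self):
        """Return the instructions plus the most recent messages."""
        recent = self.messages[-self.max_messages:]
        prefix = []
        if self.system_prompt:
            prefix = [{"role": "system", "content": self.system_prompt}]
        return prefix + recent


history = ChatHistory(system_prompt="Be concise.", max_messages=4)
history.add("user", "My dog is called Biscuit.")
history.add("assistant", "Nice name!")
history.add("user", "What is my dog called?")

# The model sees the earlier messages, so it can answer the follow-up question.
for message in history.context():
    print(message["role"], ":", message["content"])

history.clear()
print(len(history.context()))  # only the system prompt remains
```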

Control Compute Resources

Running complex models locally demands significant computing resources in terms of CPU and GPU horsepower.

Jan AI allows granular controls over resource utilization. By default models will leverage all available resources to maximize performance.

But you can also customize to constrain model compute usage if you want to free up system resources for other tasks simultaneously.

Jan AI Use Cases

With the level of capability and customization Jan AI enables, what are some of the ideal real-world use cases?

Personal Assistant

One of the most practical uses is as a personal assistant that helps with everyday tasks and questions across a wide range of domains.

You can ask for help composing emails, getting movie or dinner recommendations, solving math problems, proofreading essays, and anything else that comes up day-to-day!

Data Analysis & Research

The ability to ingest and process information on many specialized topics also makes Jan AI great for analyzing data and conducting research.

Whether you need help parsing scientific papers, making sense of financial reports, or getting quick answers to research questions – Jan AI can serve as a knowledgeable assistant.

Software Development

For developers and coders, Jan AI offers tons of potential as well.

It can help diagnose bugs, suggest solutions for errors, provide code examples across dozens of programming languages, clarify complex documentation, and much more.

Having an AI pair programmer built right into your IDE via Jan AI integrations can seriously boost productivity!

Content Creation

Jan AI can even function as a writing assistant to help with content creation.

It’s actually how this very blog post was composed! The model generated an initial outline and draft which I then reviewed, edited to my voice, and expanded upon.

So if you need help brainstorming ideas, translating thoughts into words, or proofreading written works – let Jan AI lend a hand.

And Everything In Between!

Whether you need:

- A study buddy to help retain info

- A gaming clan member to team up with

- Design inspiration for creative projects

- Feedback for practicing public speaking

- Emotional support during tough times

…Jan AI aims to handle it all!

The use cases are nearly endless given the remarkable breadth of knowledge across topics and unique human-like nature of conversations Jan AI enables completely offline.

Jan AI Future Roadmap

As amazing as Jan AI already is, the team has bold plans to make it even more capable.

Here’s a peek at what’s in store for future releases:

Versioning System

Allow locking in specific stable versions of models and Jan AI rather than auto-updating to ensure continuity.

M1/M2 Optimizations

Improved performance on M1 and M2 Mac chips by leveraging the Neural Engine and ensuring full compatibility.

Multi-Device Sync

Sync user data like models, personas, and conversations across desktop and mobile apps.

Mobile Apps

Native iOS and Android Jan AI apps to allow mobile access to models on the go.

API Access

API access to leverage Jan AI capabilities for developers building custom integrations into other software.

Third-Party Model Integration

Support for additional proprietary models like GPT-4 via tighter integration with official APIs.

As you can see, Jan AI has ambitious plans to push the boundaries of what’s possible when running chatbots locally.

The project also maintains strong momentum thanks to its open-source nature and its passionate community of contributors.

Frequently Asked Questions – FAQs

What is Jan AI?

It is an open-source platform that lets users run ChatGPT-style chatbots locally and offline.

How do I install Jan AI?

Visit jan.ai, download the version for your OS, and follow the simple installation steps.

Does Jan AI cost anything?

No, it is completely free to use, offering an open-source solution for running chatbots.

Can I customize how the models behave?

Yes, it allows customization of model parameters, input instructions, and more to tailor the chatbot experience.

What can I use Jan AI for?

This tool serves as a personal assistant, aids in data analysis, supports software development, enhances content creation, and more.

What is on the Jan AI roadmap?

The roadmap includes versioning, M1/M2 optimizations, multi-device sync, mobile apps, API access, and third-party model integration.

Get Started With Jan AI Today!

Now that you’ve seen the tremendous potential enabled by Jan AI for running conversational AI models completely offline, it’s time to experience it yourself!

Recap of why you should give Jan AI a try:

🤖 Open source access to the latest chatbot capabilities for free

🔒 Total data privacy with everything running locally

⚙️ Full control to customize models as needed

💬 Natural conversations supporting tons of practical use cases

Ready to get started?

Simply go to jan.ai and follow the quick install instructions for your operating system.

Within minutes you’ll unlock the full power of open source AI, like having your own local, ChatGPT-style assistant!

Browse the model hub, install a few that interest you, provide any custom instructions, and let the chatting commence!

We’d love to hear what creative ways you end up utilizing Jan AI and the awesome open source models available. The possibilities are endless.

So what are you waiting for? Go satisfy your curiosity by downloading Jan AI to start running incredible chatbots completely offline today!